Transparency vs. Alignment: Financial Governance of AI

By Laura Rachevsky (Spring ’26 Policy Leaders Programme Alumni)

Note: The views and opinions expressed in this policy paper are those of the author(s) and do not necessarily reflect the official policy or position of Talos Network.

Transparency is often presented as the solution to the risks posed by Artificial Intelligence (AI). Yet, as the market value of "Big Tech" becomes increasingly inseparable from AI capability, a gap is emerging between disclosure and true alignment. For the upcoming IPOs of Anthropic, OpenAI, and xAI, the current model of financial governance - centered on quarterly disclosures - is ill-equipped to manage the systemic risks of AI.

The Transparency Trap

Current transparency efforts by firms like Alphabet and Microsoft often amount to performative transparency. In February 2023, Alphabet’s demo of Bard contained a factual error that wiped $100 billion off its market value. Despite this loss, the subsequent Q1 2023 earnings call contained no mention of the incident or its implications for future risk; instead, executives and analysts focused almost exclusively on search monetization (Alphabet, 2023).

This exemplifies a failure of governance where transparency requirements do not result in effective risk evaluation. As noted by Fox (2007), opaque transparency refers to information divulged only nominally without revealing an institution's true operations. For investors, this creates a theatre of transparency that obscures the complexities of AI risks.

The “Theatre” of the Earnings Call

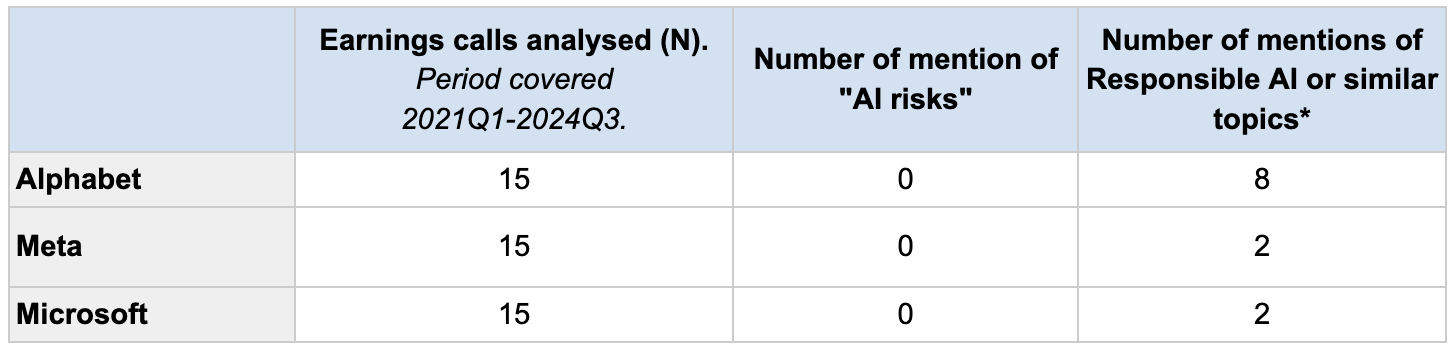

To understand this governance failure, I analyzed 45 earnings calls from Alphabet, Microsoft, and Meta (spanning Q1 2023 to Q1 2024). The results, summarized in the table below, reveal a disconnect between the discussion of AI as a revenue driver and the discussion of AI as a material risk.

Table 1: AI Mentions vs. Risk Disclosure in Big Tech Earnings Calls (Aggregated)

Source: Author’s Analysis of 45 Earnings Call Transcripts (2023-2024); *Quotes available in the Appendix, Table 2

The data show that while AI is mentioned hundreds of times to signal growth, AI risk is mentioned zero times. This reflects a problem of information asymmetry (Healy & Palepu, 2001): managers possess critical knowledge about model limitations that they are not incentivized to share.
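A tally of this kind can be approximated with simple keyword counting. The sketch below is illustrative only: the keyword patterns and the `count_mentions` function are assumptions introduced here, not the coding scheme used to produce Table 1, and a real analysis would need manual review of each match.

```python
import re

# Hypothetical keyword patterns, chosen for illustration only.
# They are NOT the coding scheme behind Table 1.
AI_PATTERNS = [r"\bAI\b", r"\bartificial intelligence\b"]
RISK_PATTERNS = [
    r"\bhallucinat\w*",
    r"\bmodel bias\b",
    r"\bAI safety\b",
    r"\bAI risks?\b",
]


def _count(patterns, text):
    """Total number of case-insensitive matches across all patterns."""
    return sum(len(re.findall(p, text, flags=re.IGNORECASE)) for p in patterns)


def count_mentions(transcript):
    """Return AI mentions and AI-risk mentions for one transcript."""
    return {
        "ai": _count(AI_PATTERNS, transcript),
        "risk": _count(RISK_PATTERNS, transcript),
    }


# Made-up sentence standing in for a transcript excerpt.
growth_talk = "AI is driving cloud growth. Our AI products and AI infrastructure are scaling."
print(count_mentions(growth_talk))
```

Note that a phrase such as "AI safety" counts toward both totals under these patterns; whether that is desirable depends on how the comparison is framed.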

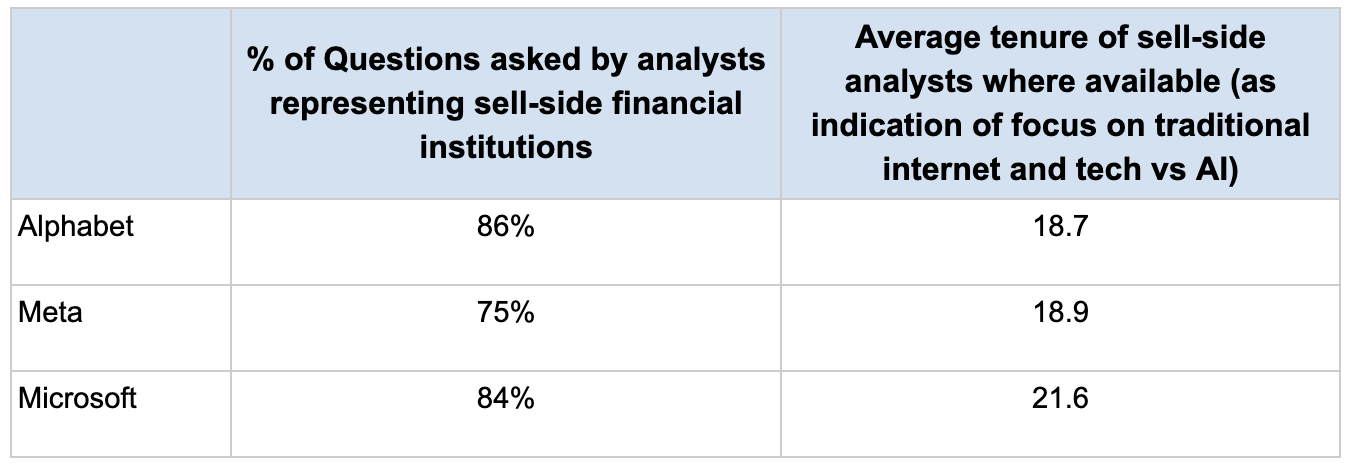

Another key finding of this research involves the background of the individuals asking the questions. The Q&A sections of earnings calls are dominated by a narrow group of sell-side analysts.

The Tenure Gap: Most analysts currently covering Big Tech are digital natives of the SaaS and search-engine era. Their expertise lies in tracking CAPEX, cloud margins, and ad-revenue growth. They lack the technical literacy to probe for alignment or catastrophic risk, leading to a focus on the inputs of AI rather than the safety of the outputs. Details on analyst tenure and the share of questions asked are in Appendix Table 3.

Maintenance of Access: As Soltes (2014) highlights, sell-side analysts rely on private interaction and "access" to management to provide value to their clients. This creates a "Confirmatory Bias," where analysts avoid challenging executives on difficult topics - like model bias or hallucination rates - to ensure their access to future calls.

The Stakeholder Incentive Gap: Why Risk is Unpriced

The failure of earnings calls to drive AI democratization is rooted in the incentive structures and power dynamics among three key groups:

Company Executives: Managerial incentives are predominantly tied to short-term stock performance. As noted by Edmans and Gabaix (2016), when pay is decoupled from long-term risk, executives engage in "strategic obfuscation," treating Responsible AI (RAI) as a qualitative ESG initiative rather than a matter of core financial materiality.

Sell-Side Analysts: As noted above, nearly all questions in earnings calls are asked by sell-side analysts representing top financial firms. These analysts are susceptible to a number of biases, including herd mentality and over-optimistic coverage, because their compensation is often tied to the success of their employer's investment banking division, where tech companies are major clients (Chang & Choi, 2017; Scharfstein & Stein, 1990).

Institutional Investors: The public has massive stakes in tech through pension funds (e.g., 10% of UK pension pots). However, institutional investors act as passive recipients of a curated narrative. The sheer volume of data in fund prospectuses creates an illusion of compliance while hiding the lack of meaningful AI risk metrics.

Structural Reporting: Quantifying the Unpriced AI Risk

To move beyond performative transparency, we must implement structural changes to reporting rules that force the quantification of AI risk. If AI safety is not reflected on the balance sheet, it will be ignored by the market until a catastrophic failure occurs.

I propose a shift toward structured and quantifiable disclosures. We already see this logic in other high‑risk sectors. Utilities and energy firms include ESG and climate‑related metrics in earnings calls because regulators treat environmental harm as a material risk (Butters, 2024). In commercial aviation, mandatory occurrence reporting schemes require airlines to report serious incidents such as bird strikes to national databases, in line with ICAO Annex 14 and national rules (Civil Aviation Authority, 2026; International Civil Aviation Organization, 2024; SKYbrary, 2024). Nuclear regulators require quarterly reports on standardized safety performance indicators and publish these results (Canadian Nuclear Safety Commission, 2023, 2024, 2025). Drug manufacturers must submit post‑marketing adverse event reports for approved products, which agencies like the FDA use to monitor safety and enforce compliance (U.S. Food and Drug Administration, 2020, 2025).

These sector‑specific safety indicators then enter investor reporting through general‑purpose accounting and disclosure rules - IFRS and jurisdictional equivalents require firms to report material risks, expected losses and provisions, so quantitative safety metrics feed into narrative risk disclosures, key performance indicators, and, where necessary, recognised provisions and contingent liabilities in the financial statements.

For AI, an analogous regime would mean routine, regulator‑supervised disclosure of model safety evaluations and failure rates. To replicate this for AI, we must:

Mandate Uniform RAI Metrics: Regulatory measures should require disclosures on safety evals, compute-to-safety ratios and model bias scores.

Redefine Materiality: By making fines and operational prohibitions - such as those in the EU AI Act - more visible within AI regulation, we draw analyst attention to the financial materiality of AI risks.

Link Compensation to Safety: Incorporating KPIs related to the responsible use of AI into executive compensation would ensure it is in the C-suite's interest to keep stakeholders informed.
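To make the idea of uniform RAI metrics concrete, a standardized quarterly disclosure record might look like the sketch below. Everything in it is an assumption made for illustration: the `RAIDisclosure` class, its field names, the 0 to 1 bias scale, and the definition of the compute-to-safety ratio as total compute divided by safety compute are not drawn from any existing regulation or reporting standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RAIDisclosure:
    """Hypothetical quarterly Responsible AI disclosure record."""

    company: str
    quarter: str
    safety_evals_run: int
    safety_evals_passed: int
    total_compute_hours: float
    safety_compute_hours: float
    model_bias_score: float  # assumed scale: 0 (no measured bias) to 1

    def __post_init__(self):
        # Reject records that are internally inconsistent.
        if self.safety_evals_run <= 0:
            raise ValueError("safety_evals_run must be positive")
        if not 0 <= self.safety_evals_passed <= self.safety_evals_run:
            raise ValueError("safety_evals_passed must be between 0 and safety_evals_run")
        if self.safety_compute_hours <= 0:
            raise ValueError("safety_compute_hours must be positive")
        if not 0.0 <= self.model_bias_score <= 1.0:
            raise ValueError("model_bias_score must be between 0 and 1")

    @property
    def eval_pass_rate(self) -> float:
        """Share of safety evaluations passed in the quarter."""
        return self.safety_evals_passed / self.safety_evals_run

    @property
    def compute_to_safety_ratio(self) -> float:
        """Total compute per unit of compute spent on safety work (assumed definition)."""
        return self.total_compute_hours / self.safety_compute_hours


# Made-up figures for a fictional company.
example = RAIDisclosure("ExampleCo", "Q1", 200, 190, 1000.0, 50.0, 0.12)
print(example.eval_pass_rate, example.compute_to_safety_ratio)
```

The point of a fixed schema is comparability: if every filer reports the same fields under the same definitions, analysts can track the figures across firms and quarters in the same way they track CAPEX or cloud margins.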

Reforming the Earnings Call for Pre-IPO Giants

As we prepare for the IPOs of OpenAI, Anthropic, and xAI, we are at a constitutional moment for financial AI governance. As these companies enter the public markets, we need to ensure that their reporting structures are tailored to AI risks.

We must broaden the range of voices in the Q&A section of earnings calls. Mandating the inclusion of Informed Auditors - representatives from responsible investor groups and technical safety experts - would allow for a more critical examination of AI risks than the current analyst-dominated forum allows. Collective financial bargaining power from institutional investors could be a meaningful mechanism to push for this inclusion.

Conclusion: The Road to Investor Engagement

Achieving AI democratization - ensuring the public benefits from the value created by AI - requires more than access to tools; it requires public accountability through financial governance. By embracing alignment over mere transparency, we can use the market's financial levers to direct investment flows toward more responsible products.

Selected References

Alphabet Inc. (2023). "Alphabet Q3 2023 Earnings Call." abc.xyz/investor.

Ananny, M., & Crawford, K. (2018). "Seeing without Knowing: Limitations of the Transparency Ideal..." New Media & Society.

Back, I. (2019). "Too Big for Earnings Calls." Brunswick Group.

Butters, J. (2024). "Lowest Number of S&P 500 Companies Citing ‘ESG’ on Earnings Calls..." FactSet Insight.

Eckerle, K., et al. (2020). "ESG and the Earnings Call: Communicating Sustainable Value Creation...".

Edmans, A., & Gabaix, X. (2016). "Executive Compensation: A Modern Primer." Journal of Economic Literature.

Fox, J. (2007). "The Uncertain Relationship between Transparency and Accountability." Development in Practice.

Healy, P. M., & Palepu, K. G. (2001). "Information Asymmetry, Corporate Disclosure, and the Capital Markets..." Journal of Accounting and Economics.

Minkkinen, M., et al. (2024). "What about Investors? ESG Analyses as Tools for Ethics-Based AI Auditing." AI & Society.

Soltes, E. (2014). "Private Interaction between Firm Management and Sell‐side Analysts..." Journal of Accounting Research.

Chang, J. W., & Choi, H. M. (2017). "Analyst Optimism and Incentives under Market Uncertainty." Financial Review, 52(3), 307–45.

Scharfstein, D. S., & Stein, J. C. (1990). "Herd Behavior and Investment." The American Economic Review, 80(3), 465–479.

Canadian Nuclear Safety Commission (2023). "Departmental results report 2022–23." Canadian Nuclear Safety Commission.

Canadian Nuclear Safety Commission (2025). "Departmental plan 2023–24." Canadian Nuclear Safety Commission.

Civil Aviation Authority (2026, April 6). "The MORs code." UK Civil Aviation Authority.

International Civil Aviation Organization (2024). "Bird strike reporting" (Annex 14, Aerodrome Design and Operations, Volume I).

SKYbrary (2024, July 1). "Bird strike reporting." SKYbrary Aviation Safety.

U.S. Food and Drug Administration (2025, December 18). "Postmarketing adverse event reporting compliance program." U.S. Food and Drug Administration.

Appendix

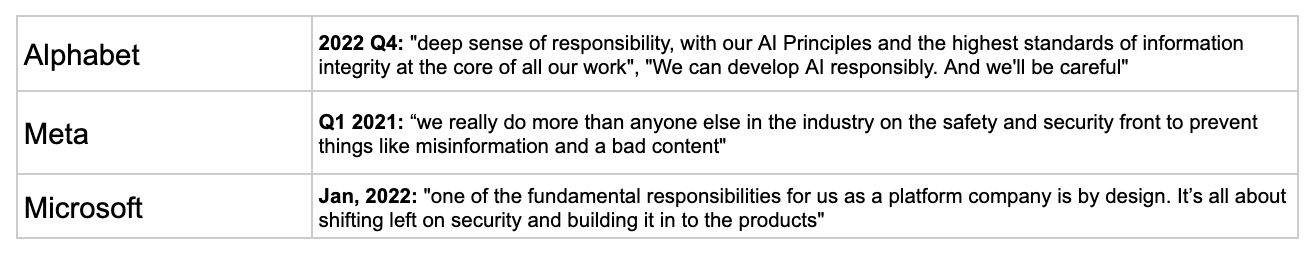

Table 2: Sample quotes of Responsible AI mentions in the earnings calls of tech companies analysed. Full list available here

Table 3: Sell-side analyst engagement in earnings calls